March 2026

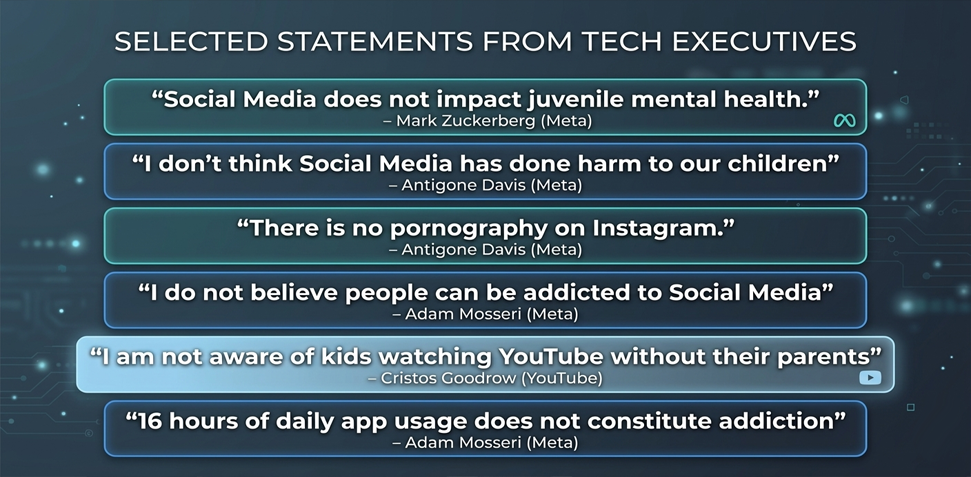

The results of recent trials against Meta Platforms are everywhere on our LinkedIn feeds. While some may be fatigued by hearing about them, we should not lose sight of their significance. Child cyber safety advocates have been calling for accountability for nearly two decades, often facing dismissals or threats when challenging the false claims made by Big Tech executives.

Since 2004, Mark Zuckerberg has led the social media landscape, exploiting gaps in rules and regulations while prioritising profit over user safety. Other platforms have followed a similar path. While some may credit these leaders as savvy entrepreneurs, the human cost of their inaction is undeniable: the harm to children, mental health, and families has been profound.

For years, Section 230 of the Communications Decency Act in the U.S. shielded these companies from liability, enabling platform design that maximised engagement with little consideration for harm. March 2026 has changed that narrative.

In the recent Los Angeles and New Mexico trials, executives for the first time faced juries that could weigh the evidence of harm, with no legal or financial influence to shield them.

The Los Angeles case, in particular, signalled a critical shift in how courts assess social media-related harm. Rather than focusing on individual content, which Section 230 protects, the jury examined the design of the platform itself. Features such as infinite scroll, auto-play, and algorithmic recommendations were treated not as neutral tools, but as mechanisms deliberately engineered to maximise engagement. Evidence showed these features could exploit adolescent vulnerabilities and contribute to compulsive use.

Another key factor was failure to warn. Internal research presented during the trial demonstrated that Meta was aware of potential negative impacts on younger users. The case was not just about harm occurring, but about a company that knew, or could reasonably be expected to know, of the risks and failed to mitigate them.

Ultimately, these trials highlight a broader shift: social media platforms are no longer seen as passive conduits of information but as engineered systems with predictable behavioural impacts.

Meta responded with the now-familiar statement:

“We respectfully disagree with the verdicts and will appeal. We work hard to keep people safe on our platforms. We remain confident in our record of protecting teens online.”

We have heard such lies so often before.

While Meta has indicated an appeal, these civil verdicts increase pressure on the company to implement meaningful changes. Continued inaction would not only expose users to known risks but also invite further legal, regulatory, and reputational scrutiny.

The next trial, scheduled for June, is expected to be even larger. Multiple U.S. school districts will argue that social media platforms have caused widespread disruption and harm in educational environments. These cases represent the next wave of accountability and may set the stage for systemic reform.

Civil cases like these are crucial in driving change. They signal to platforms, regulators, and the public that design choices have consequences and that accountability is possible, even against the largest tech companies. While civil liability remains the primary tool at this time, persistent failure to address known harms could escalate scrutiny and regulatory action in the years ahead.

This neglect may well open the door to criminal prosecution, something I truly hope will occur if Big Tech continues to ignore the global demand for change. The question may well be: which State Prosecutor would have the courage to do that? For the first time ever, we have had lawyers courageous enough to take Meta on in a civil trial. Perhaps that courage will be contagious!

Regardless, the message is clear: the era of “business as usual” for Big Tech is over. Platforms must now take seriously the safety of the young users who power their networks, or face continued legal and societal consequences.

A line in the sand has finally been drawn, and I am very excited.